Description

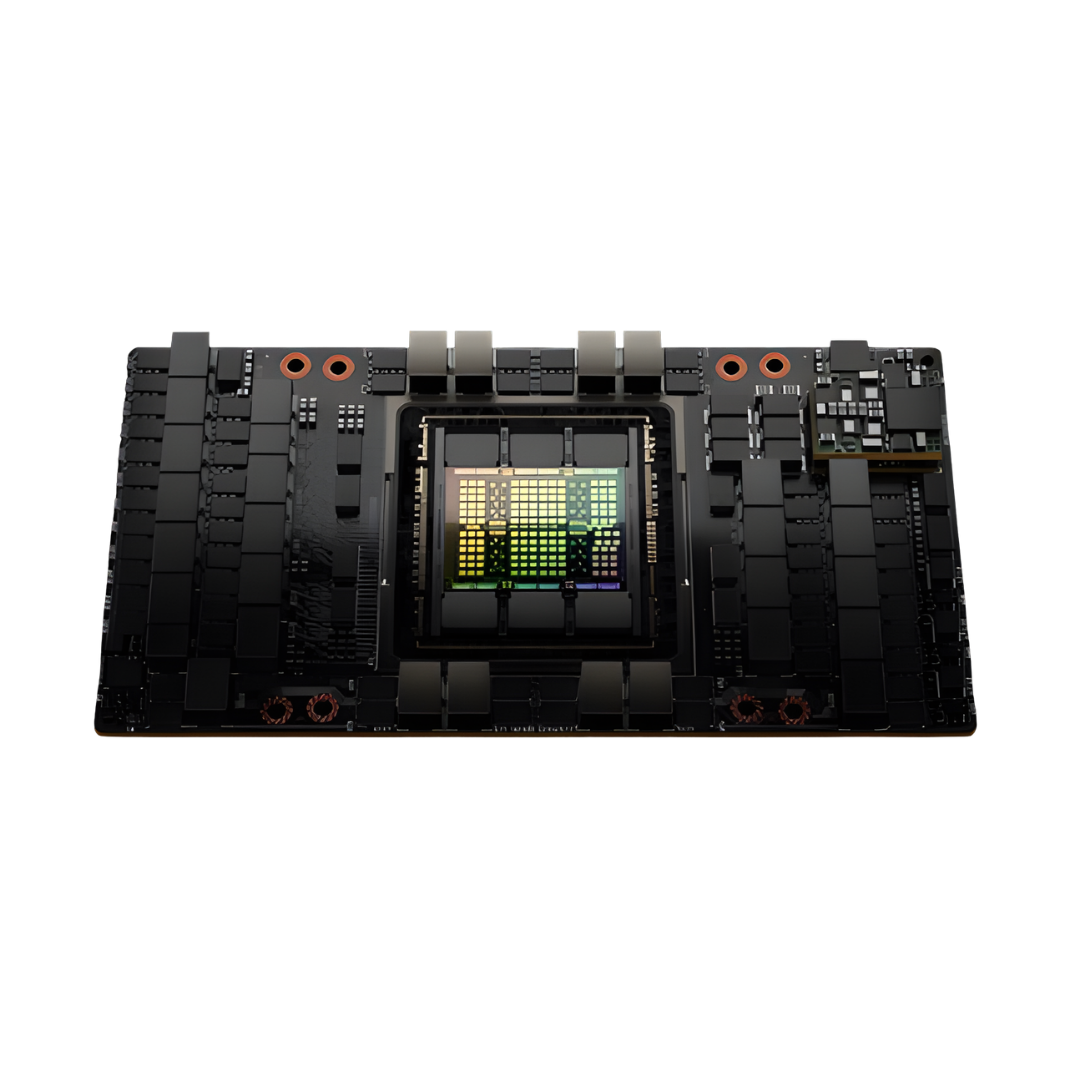

| Specifications | H100 SXM | H100 NVL |
|---|---|---|
| FP64 | 34 teraFLOPS | 68 teraFLOPS |
| FP64 Tensor Core | 67 teraFLOPS | 134 teraFLOPS |
| FP32 | 67 teraFLOPS | 134 teraFLOPS |
| TF32 Tensor Core* | 989 teraFLOPS | 1,979 teraFLOPS |
| BFLOAT16 Tensor Core* | 1,979 teraFLOPS | 3,958 teraFLOPS |
| FP16 Tensor Core* | 1,979 teraFLOPS | 3,958 teraFLOPS |
| FP8 Tensor Core* | 3,958 teraFLOPS | 7,916 teraFLOPS |
| INT8 Tensor Core* | 3,958 TOPS | 7,916 TOPS |
| GPU Memory | 80GB | 188GB |
| GPU Memory Bandwidth | 3.35TB/s | 7.8TB/s |
| Decoders | 7 NVDEC, 7 JPEG | 14 NVDEC, 14 JPEG |
| Max Thermal Design Power (TDP) | Up to 700W (configurable) | 700-800W (configurable) |
| Multi-Instance GPUs | Up to 7 MIGS @ 10GB each | Up to 14 MIGS @ 12GB each |
| Form Factor | SXM | PCIe dual-slot air-cooled |
| Interconnect | NVIDIA NVLink®: 900GB/s; PCIe Gen5: 128GB/s | NVIDIA NVLink: 600GB/s; PCIe Gen5: 128GB/s |
| Server Options | NVIDIA HGX H100 Partner and NVIDIA-Certified Systems™ with 4 or 8 GPUs; NVIDIA DGX H100 with 8 GPUs | Partner and NVIDIA-Certified Systems with 1-8 GPUs |
| NVIDIA AI Enterprise | Add-on | Included |

\* With sparsity.
